What are Cloud Backup, BaaS, RTO, RPO and RCO?

One of the subjects that keeps my eyes open in the digital transformation world is the cloud and its remarkable ability to bring new thinking and new ways of using compute resources. We now speak of workloads, coding and mobility, but one point will never change: we have to evaluate data resiliency in the cloud. It is no small part of the job, and certainly one of the most complex to deal with.

In this article I don’t want to bother you with the new government regulations on data sovereignty, which threaten to complicate the delivery model that has made cloud computing attractive and present new concerns for companies with operations in multiple countries.

Promises only bind those who believe them, hum… I don’t want to be sarcastic either: there is a big promise of simplification and standardization in the implementation of cloud technologies.

But the United States-European Union “Safe Harbor” agreement has dominated most of the discussions, and the recent localization law in Russia mandates that personal data on Russian citizens be stored in databases physically located within the country itself.

OK, OK, OK, OK, as Leo Getz (Joe Pesci) would say, let’s start the article: what is cloud backup?

Cloud backup, or BaaS (Backup as a Service), means backing up and restoring data either by outsourcing backup and recovery to a public managed service provider that runs a cloud backup solution, or by relying on cloud software or appliances that you run yourself.

Speaking of backup, I would expect a discussion of “restore”, and that lets me introduce two well-known indicators: welcome to RTO and RPO.

RTO, Recovery time objective definition:

The recovery time objective (RTO) is the targeted duration of time and a service level within which a business process must be restored after a disaster (or disruption) in order to avoid unacceptable consequences associated with a break in business continuity.[1]

It can include the time spent trying to fix the problem without a recovery, the recovery itself, testing, and the communication to the users. Decision time for the users’ representative is not included.
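To make the arithmetic concrete, here is a minimal Python sketch that adds up the phases counted toward the RTO and compares the total with the objective. The phase names and durations are invented for the illustration; they are assumptions, not values taken from any standard or product.

```python
from datetime import timedelta


def total_recovery_time(phases):
    """Sum the durations of every phase that counts toward the RTO."""
    return sum(phases.values(), timedelta())


def meets_rto(phases, rto):
    """Return True when the summed phases fit within the RTO."""
    return total_recovery_time(phases) <= rto


# Hypothetical durations, for illustration only.
phases = {
    "fix_attempt": timedelta(minutes=30),
    "recovery": timedelta(hours=2),
    "testing": timedelta(minutes=45),
    "user_communication": timedelta(minutes=15),
}
rto = timedelta(hours=4)

print(total_recovery_time(phases))  # 3:30:00
print(meets_rto(phases, rto))       # True
```

Decision time is deliberately left out of the dictionary, in line with the definition above.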

The business continuity timeline usually runs parallel with an incident management timeline and may start at the same, or different, points.

In accepted business continuity planning methodology, the RTO is established during the Business Impact Analysis (BIA) by the owner of a process (usually in conjunction with the business continuity planner).

RPO, Recovery point objective definition:

A recovery point objective, or “RPO” is the maximum targeted period in which data might be lost from an IT service due to a major incident.

The RPO gives systems designers a limit to work to.

For instance, if the RPO is set to four hours, then in practice, off-site mirrored backups must be continuously maintained – a daily off-site backup on tape will not suffice.

Care must be taken to avoid two common mistakes around the use and definition of RPO.

Firstly, business continuity staff use business impact analysis to determine RPO for each service – RPO is not determined by the existent backup regime.

Secondly, when any level of preparation of off-site data is required, the period during which data is lost very often starts near the beginning of the work to prepare the backups that are eventually taken off-site, rather than at the time the backups are actually moved off-site.
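The four-hour example and the second mistake can be sketched in a few lines of Python. This is a simplified model under my own assumptions: backups run at a fixed interval, and the loss window is the interval plus the time between the start of backup preparation and the moment the copy is safely off-site. The function names are hypothetical.

```python
from datetime import timedelta


def worst_case_data_loss(backup_interval, preparation_lag=timedelta(0)):
    """Worst-case loss window in this simplified model.

    The newest usable off-site copy reflects the data as it was when
    preparation started, so the lag adds to the backup interval.
    """
    return backup_interval + preparation_lag


def meets_rpo(backup_interval, rpo, preparation_lag=timedelta(0)):
    """Return True when the worst-case loss window fits within the RPO."""
    return worst_case_data_loss(backup_interval, preparation_lag) <= rpo


rpo = timedelta(hours=4)

# A daily off-site tape cannot meet a four-hour RPO.
print(meets_rpo(timedelta(days=1), rpo))                          # False
# A three-hour cycle with 30 minutes of preparation fits.
print(meets_rpo(timedelta(hours=3), rpo, timedelta(minutes=30)))  # True
# A four-hour cycle looks fine until the preparation lag is counted.
print(meets_rpo(timedelta(hours=4), rpo, timedelta(minutes=30)))  # False
```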

Nothing new at this stage for people coming from the IT and digital enterprise world, but I would now like to introduce the RCO.

RCO, Recovery consistency objective definition:

Following the definitions for RPO and RTO, RCO defines a measurement of the consistency of distributed business data within interlinked systems after a disaster incident.

Similar terms used in this context are “Recovery Consistency Characteristics” (RCC) and “recovery object granularity” (ROG).

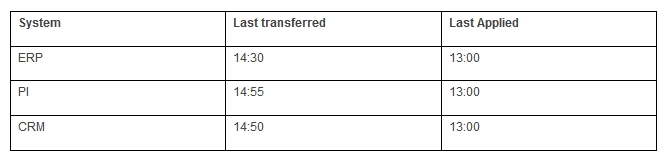

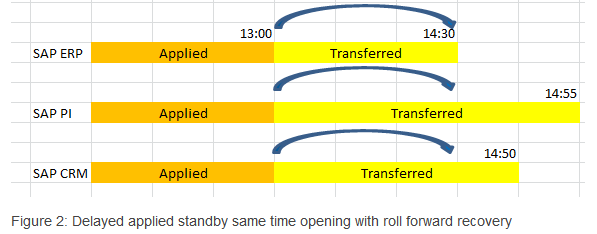

In this RCO example there are three systems: ERP, CRM and PI. The configured delay time is two hours, and the disaster occurred at 15:00.

Here the system with the earliest last-transferred data is ERP.

So all systems can be restored up to 14:30 and opened at 14:30 using Oracle point-in-time recovery commands.

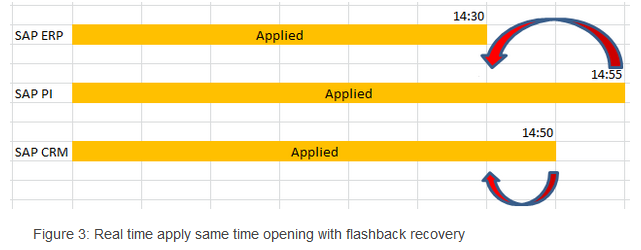

In this configuration, the systems from the previous example would behave as shown in Figure 3.

The oldest data would be in the SAP ERP system, at 14:30. Since the data in the other two systems is ahead of it, they need to be rewound to the same point in time as ERP, which can be achieved with the flashback command. Hey, here we get our RCO!
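The idea behind that common point in time can be sketched in Python: the consistent recovery point is the earliest last-transferred timestamp across the interlinked systems, and every other system has to be rewound by the difference. Only the 14:30 value for ERP comes from the example; the CRM and PI timestamps and the date are assumptions made up for the illustration, and the function names are hypothetical.

```python
from datetime import datetime


def consistent_recovery_point(last_transferred):
    """Earliest last-transferred time: the point all systems can share."""
    return min(last_transferred.values())


def rewind_needed(last_transferred):
    """How far each system must be rewound to reach the common point."""
    target = consistent_recovery_point(last_transferred)
    return {name: ts - target for name, ts in last_transferred.items()}


day = datetime(2024, 1, 1)  # arbitrary date, only the time of day matters
last_transferred = {
    "ERP": day.replace(hour=14, minute=30),  # from the example
    "CRM": day.replace(hour=14, minute=45),  # hypothetical value
    "PI": day.replace(hour=14, minute=50),   # hypothetical value
}

point = consistent_recovery_point(last_transferred)
print(point.strftime("%H:%M"))  # 14:30

for name, delta in rewind_needed(last_transferred).items():
    print(name, "rewind by", delta)
```

In a real setup the rewind itself would be done by the database tooling; the sketch only shows how the target time is chosen.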

Technically:

Data Guard must be configured with the LGWR transport method and standby redo logs.

Flashback must be enabled on the standby side, and enough disk space must be provided for the retention time and the database log generation rate.

Now that we know what a restore is, we can start to categorize our data, and of course introduce the cloud backup and restore concept.

What is Data Categorization (classification)?

Data and information classification refers to the policies and procedures by which stored data is categorized so the information can be accessed, updated, protected, recovered and managed more efficiently in accordance with specific application requirements.

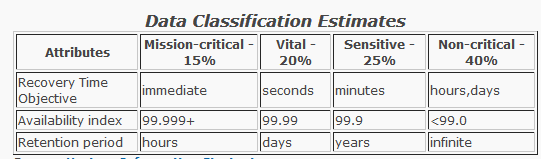

Data, information and applications can be classified in four primary ways based on criticality, according to their RTO (recovery time objective) and other measures as follows:

Classes of Data

Classifying data is becoming a critical IT activity for the purposes of implementing the optimal data solution to store and protect data throughout its lifetime.

Developing a data classification methodology for a business involves establishing criteria for classes of data or application based on its value to the business.

Four distinct levels of classifying data or applications are commonly used: mission-critical data, vital data, sensitive data and non-critical data. Determining these levels takes some cooperative effort within the business and when completed, enables the most cost-effective storage and data protection solutions to be implemented. Data classification levels also identify which backup and recovery or business resumption solution is best suited for each level to meet the RPO (Recovery Point Objective) and RTO (Recovery Time Objective) requirements. While very important, RTO & RPO are not the only parameters used to classify data. Other considerations include availability, length of data retention, service levels and performance requirements, and overall costs.

Here is a summary of each of the four data classification categories with a description of the attributes found in each:

Mission-critical data

Mission-critical data is used in key business processes or customer-facing applications. It can account for as much as 15 percent of all data stored online and typically has very fast response time requirements. Mission-critical applications have an RTO (Recovery Time Objective) of one minute or less, to immediately resume business after a disruption. Losing access to mission-critical data means a rapid loss of revenue and a potential loss of customers, and places the survival of the business at risk. Mirroring protects against device failures but not against data corruption, intrusion, or human or software errors. Therefore, all mission-critical data that is mirrored should also have point-in-time copies that enable full recovery to a point before the corruption event. Mission-critical data is usually classified as company secret, and some applications may be candidates for encryption. Mission-critical data is normally backed up using integrated virtual tape libraries (disk arrays and tape libraries combined) or SATA-based disk arrays. Maintaining mirrored copies for non-mission-critical data is extremely expensive.

Vital data

Vital data accounts for about 20 percent of all data stored online; however, it doesn’t require instantaneous recovery for the business to remain in operation. Vital data may be classified as company secret. Data recovery times (the RTO) ranging from a few minutes to an hour or more are acceptable, and vital data is normally backed up using integrated virtual tape libraries or SATA-based disk arrays. Mirroring is not normally required for vital data, as techniques such as point-in-time copy, snapshot copy, CDP (Continuous Data Protection) and de-duplication are sufficient to meet the application’s RTO while avoiding the additional hardware costs associated with disk mirroring.

Sensitive data

Sensitive data accounts for about 25 percent of all data stored online. Recovery times (the RTO) can range from several minutes to several hours without causing major operational or business impact. With sensitive data, alternative sources exist for accessing or reconstructing the data in case of data loss. The growing popularity of SATA-based disk subsystems for backup now provides viable and cost-effective technology options along with tape, which has historically been the primary choice for backup and recovery.

Non-critical data

Non-critical data represents approximately 40 percent of all data stored online, making it the largest classification category. Lost, corrupted or damaged non-critical data can be reconstructed with minimal effort, and acceptable recovery times can range from hours to several days since this data is not essential for business survival. Non-critical data may, however, suddenly become valuable based on unknown circumstances, giving momentum to extending the useful lifecycle of data significantly. E-mail archives, legal records, medical information, scientific data, financial transactions, security data and fixed content often fit this profile. Most non-critical data is backed up to lower-cost storage solutions, with tape being the most popular choice.
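As a rough way to picture the four classes, here is a small Python sketch that maps an RTO to a class. The RTO ranges described above overlap, so a real classification needs more than one number; only the one-minute bound for mission-critical data comes from the text, and the one-hour and eight-hour cutoffs are assumptions I picked for the example.

```python
from datetime import timedelta

# Upper RTO bound per class, checked in order.
# Only the one-minute bound comes from the text; the one-hour and
# eight-hour cutoffs are assumptions chosen for this illustration.
RTO_CUTOFFS = [
    (timedelta(minutes=1), "mission-critical"),
    (timedelta(hours=1), "vital"),
    (timedelta(hours=8), "sensitive"),
]


def classify_by_rto(rto):
    """Return the first class whose upper bound covers the given RTO."""
    for cutoff, label in RTO_CUTOFFS:
        if rto <= cutoff:
            return label
    return "non-critical"


print(classify_by_rto(timedelta(seconds=30)))  # mission-critical
print(classify_by_rto(timedelta(minutes=20)))  # vital
print(classify_by_rto(timedelta(hours=3)))     # sensitive
print(classify_by_rto(timedelta(days=2)))      # non-critical
```

In practice RPO, availability, retention, service levels and cost would also feed into the decision, as noted earlier.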

The second aspect to introduce is that data exists in one of three basic states: at rest, in process (also called in use), and in transit.

All three states require unique technical solutions for data classification, but the applied principles of data classification should be the same for each. Data that is classified as confidential needs to stay confidential when at rest, in process, and in transit.

Data can also be either structured or unstructured.

Typical classification processes for the structured data found in databases and spreadsheets are less complex and time-consuming to manage than those for unstructured data such as documents, source code, and email.

Generally, organizations will have more unstructured data than structured data.

Regardless of whether data is structured or unstructured, it is important for organizations to manage data sensitivity.

When properly implemented, data classification helps ensure that sensitive or confidential data assets are managed with greater oversight than data assets that are considered public or free to distribute.

Data at rest in IT means inactive data that is stored physically in any digital form (e.g. databases, data warehouses, spreadsheets, archives, tapes, off-site backups, mobile devices etc.).

Data in use is an IT term referring to active data which is stored in a non-persistent digital state, typically in computer random access memory (RAM), CPU caches, or CPU registers.

Data in transit is divided into two categories: information that flows over a public or untrusted network such as the internet, and data that flows within the confines of a private network such as a corporate or enterprise Local Area Network (LAN).

Today, given the spiraling challenge of managing data growth, backing up classified data to the cloud in an OPEX, or pay-as-you-go, model is one of the major responses. Of course, this type of solution can be implemented in a hybrid cloud or in a new SDDC implementation, but that is again another topic.

Checklist to Evaluate Cloud Backup and Data Protection Solutions

This is an exhaustive list of product features that will help you evaluate the right product. It covers enterprise, SMB and service provider needs for cloud backup solutions and BaaS.

- What are the supported environments: Public/Private Cloud, On-Premises, Hybrid Deployment?

- What offerings and SLAs are provided: Backup as a Service, DR as a Service, Datacenter Reliability, Committed SLA?

- What are the backup features: Backup from Disk, Backup from NFS Storage, VM Backups, Storage Snapshots, Native Tape Support, Agentless Backup, Built-in WAN Acceleration?

- What are the search and restore capabilities: File Level Recovery, Microsoft Active Directory, Microsoft Exchange, Microsoft SQL Server, Microsoft SharePoint, Oracle Database, Self-Service Recovery Delegation, System Center Data Protection Manager?

- What are the automation and availability possibilities: Scheduling Data Retention, Data Compression, Network Throttling, Incremental Data Backup, End-to-End Encryption, Encryption Type, Multi-tenant Monitor & Report, Preset Alarms & Realtime Dashboard, Customizable Reporting?

- What are the failover solutions: Replica Rollover, Planned Failovers, VM Backup, Recoverability Test?

- What backup host types are supported: Windows, macOS, Linux, Android and iOS, Granular Backups (Files/Folders), Geo-Location Visibility, Remote Wipe?

- Is cloud-to-cloud backup supported: MS Office365, G Suite, Salesforce?

- Can virtualized environments be protected: VMware Virtualization Platforms, Hyper-V Virtualization Platform, Backup for XenServer, VMX & VMDK Recovery, Full VM Recovery, etc.?

- What are the other capabilities: File Manager, VMware Hosts & Datastores, Migration Tasks, Automation Centralized Management?

- What support options are available: Phone Support, Online Support, Trouble Tickets, Chat Support, Customer Training?

This is my selected list of the products best suited to a cloud backup implementation, judged against several KPIs.

Veeam Backup & Replication Overview.

Veeam Backup & Replication is a powerful, easy-to-use and affordable backup and Availability solution.

It provides fast, flexible and reliable recovery of virtualized applications and data, bringing VM (virtual machine) backup and replication together in a single software solution. Veeam Backup & Replication delivers award-winning support for VMware vSphere and Microsoft Hyper-V virtual environments.

Backup: Veeam Backup & Replication provides fast and reliable image-based backup for vSphere and Hyper-V virtual environments — all without the use of agents — giving you the ability to achieve shorter backup windows and reduce backup and storage costs.

Recovery: Veeam Backup & Replication delivers lightning-fast, reliable restore for individual files, entire VMs and application items — ensuring you have confidence in virtually every recovery scenario — giving you the ability to attain low recovery time objectives of < 15 minutes.

Replication: Deliver advanced, image-based VM replication and streamlined disaster recovery, ensuring availability of your mission-critical applications. Veeam gives you the ability to achieve RTOs of < 15 minutes for ALL applications.

Asigra Backup & Recovery Solution Overview.

Asigra provides unsurpassed reliability, security, manageability, and affordability in data recovery for enterprises worldwide. Explore how it can help your organization conquer the complexity of recovering data, from the core to beyond the edge.

Product Highlights:

- Server Backup, meet business continuity and disaster recovery objectives for any hardware or software in your data center. Recover local machines, convert physical machines into virtual copies and failover to remote warm spares.

- Remote Offices, extend data recovery protection to branch offices and remote locations. Learn how our cloud architecture reduces bandwidth, eases deployment of backup software, and runs in Cisco routers as a backup appliance.

- Virtualized Environments, maximize your virtualization strategy with agentless software certified to run as virtual appliances on VMware, Citrix XenServer and Microsoft Hyper-V.

- Endpoint Devices, protect data on any device that connects to your network, including laptops, desktops, smartphones, tablets and phablets on all leading operating systems and platforms.

- Cloud to Cloud Backup, reclaim control of business information that sits in cloud-based applications and platforms like Salesforce.com and Google Apps. Meet compliance requirements and backup data to authorized, secure data centers.

- Tiered Recovery, why manage older data the same as newer, more critical data? Get a mix of fast and cost-effective recovery capabilities by establishing different Recovery Point and Recovery Time Objectives. http://www.asigra.com/

Actifio Backup & Recovery Solution

Actifio replaces siloed data management applications with an application-centric, SLA-driven approach.

Production data is captured non-disruptively and in its native form, to make it instantly available when needed. A single physical copy – a “golden master,” kept current through an “incremental forever” model – is used to spawn unlimited virtual copies, across any use case where a copy of production data is required.

Copy Data Virtualization frees increasingly strategic data from increasingly commoditized infrastructure, replacing the many siloed systems you’re using today to protect and access copies of the same production data. It replaces all the software licensing and capital intensive hardware tied up in Backup, Snapshot, Disaster Recovery, Business Continuity, Dev & Test, Compliance, Analytics, and other systems with a single, radically simple approach that does one thing: Make whatever data, from whenever it was created, available wherever you need it.

At the highest level, Actifio lets you do these things:

- Instantly mount and recover virtual machines from large VM farms

- Manage that data in the most efficient way possible

- Use virtual or physical copies of the data whenever and wherever you need them

- Capture application-consistent data with incremental-forever backups for databases, NAS file systems and VM farms

- Replicate to, and recover in, any cloud such as AWS, Azure or Oracle Cloud, and store backup images in any storage and S3-compatible object storage

- Capture data with application consistency using native APIs such as Oracle RMAN for Oracle, VSS APIs for Microsoft SQL and VADP for VMware

Commvault Data Protection, Backup and Recovery Overview

Commvault Data Protection and Recovery Solutions: back up your databases, files, applications, endpoints and VMs with maximum efficiency according to data type and recovery profile. Integrate hardware snapshots. Optimize storage with deduplication. Recover your data rapidly and easily, whenever you need to, and leverage reports to continually improve your backup and recovery processes. Commvault software eliminates the separate data silos associated with traditional backup, archive and reporting products, in a vendor-neutral solution that frees you from lock-in while reducing infrastructure requirements.

Enterprise data is anything but uniform. Which is why your data protection, backup and recovery solution needs to cover the full range of data sources, file types, storage media and backup modes — from snapshots to streaming.

With all that diversity, the last thing you want is to maintain a separate point solution for each distinct backup and recovery requirement. With Commvault, you don’t have to. Commvault’s integrated, automated data protection approach gives you a single, complete view of all your stored data no matter where it is, on-premises or in the cloud.

Commvault works with:

- Databases: Oracle, Microsoft SQL Server, SAP, IBM DB2, MySQL and more. Its snapshot management solution speeds up recovery and helps eliminate backup-window pressures for large applications that have outpaced streaming backup techniques.

- Email: Commvault’s snapshot management makes it possible to search and recover whole applications and mailboxes as well as individual emails, attachments and more.

- Virtual Machines: Fully integrated with virtualization platforms such as VMware and Hyper-V, Commvault provides a way to protect hundreds and even thousands of VMs without negatively affecting productivity.

Amazon S3 Backup and Recovery Solution.

Amazon Web Services (AWS) storage solutions are designed to deliver secure, scalable, and durable storage for businesses looking to achieve efficiency and scalability within their backup and recovery environments, without the need for an on-premises infrastructure.

You can use Amazon S3 to store and retrieve any amount of data, at any time, from anywhere on the web. Amazon S3 stores data as objects within resources called buckets. AWS Storage Gateway and many third-party backup solutions can manage Amazon S3 objects on the customer’s behalf. You can store as many objects as you want in a bucket, and you can write, read, and delete objects in your bucket. Single objects can be up to 5 TB in size. AWS storage solutions let you choose the region in which your data resides, to minimize latency and costs as well as to address regulatory requirements, which gives you complete control of your data. AWS doesn’t require capacity planning, purchasing capacity in advance or any large up-front payments. Anyone can get the benefits of AWS storage solutions without the upfront investment and the hassle of setting up and maintaining an on-premises system.

Amazon Simple Storage Service (Amazon S3) makes it simple and practical to collect, store, and analyze data – regardless of format – all at massive scale. S3 is object storage built to store and retrieve any amount of data from anywhere – web sites and mobile apps, corporate applications, and data from IoT sensors or devices. It is designed to deliver 99.999999999% durability, and has many customers each storing billions of objects and exabytes of data. You can use it for media storage and distribution, as the “data lake” for big data analytics, as a backup target, and as the storage tier for serverless computing applications. It is ideal for capturing data like mobile device photos and videos, mobile and other device backups, machine backups, machine-generated log files, IoT sensor streams, and high-resolution images, and making it available for machine learning to other AWS services and third party applications for analysis, trending, visualization, and other processing.

Amazon S3 offers a range of storage classes designed for different use cases:

- Amazon S3 Standard for general-purpose storage of frequently accessed data.

- Amazon S3 Standard – Infrequent Access for long-lived, but less frequently accessed data.

- Amazon Glacier for long-term archive.
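To show how these three classes could line up with a backup policy, here is a small Python sketch that picks a class from the number of days since a backup object was last read. The strings STANDARD, STANDARD_IA and GLACIER are the storage class values used by the S3 API; the function name and the 30-day and 180-day thresholds are assumptions of mine, not AWS recommendations.

```python
# Thresholds in days; both values are assumptions made for this example.
INFREQUENT_AFTER_DAYS = 30
ARCHIVE_AFTER_DAYS = 180


def pick_storage_class(days_since_last_access):
    """Suggest an S3 storage class for a backup object (hypothetical helper)."""
    if days_since_last_access < 0:
        raise ValueError("days_since_last_access must not be negative")
    if days_since_last_access < INFREQUENT_AFTER_DAYS:
        return "STANDARD"      # frequently accessed data
    if days_since_last_access < ARCHIVE_AFTER_DAYS:
        return "STANDARD_IA"   # long-lived, less frequently accessed data
    return "GLACIER"           # long-term archive


print(pick_storage_class(3))    # STANDARD
print(pick_storage_class(90))   # STANDARD_IA
print(pick_storage_class(400))  # GLACIER
```

The returned value is the kind of string you would pass as the storage class when uploading an object; in practice a bucket lifecycle rule is often a simpler way to move objects between classes over time.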

Microsoft Azure Backup Overview

Azure Backup is a simple and cost-effective backup-as-a-service solution that extends tried-and-trusted tools on-premises with rich and powerful tools in the cloud. It delivers protection for customers’ data no matter where it resides: in the enterprise data center, in remote and branch offices or in the public cloud, while being sensitive to the unique requirements these scenarios pose. Azure Backup, now in a seamless portal experience with Azure Site Recovery, offers minimal maintenance and cost-efficiency, consistent tools for offsite backups and operational recovery, and unified application availability and data protection. Azure Backup delivers these key benefits:

- Automatic Storage Management

- Unlimited Scaling

- Multiple Storage Options

- Unlimited Data Transfer

- Data Encryption

- Application-consistent Back-up

- Long-term retention

Product Highlights:

- Simple and reliable cloud integrated backup as a service

- Unified solution to protect data on-premises and in the cloud

- 99.9% availability guaranteed

- Reliable offsite backup target

- Efficient incremental backups

- Secure—data is encrypted in transit and at rest

- Geo-replicated backup store

to be continued.